In the last edition of the Overtone newsletter, we delved into the “generative generation” and the many use cases for generative AI. We also looked at what happens when the internet is flooded with content created by robots rather than humans.

We believe that alongside all the technologies that can “write” like humans, we will need technology that can process this content and help people sort through it and make choices, because it won't be humanly possible to do so at that scale. This includes the Overtone depth score that you’ve seen in this newsletter before. We are now also ready to show a model that looks at articles more granularly and picks up signals such as original reporting by journalists, opinion, statements presented as fact, and Low Quality signals such as toxicity, clickbait, and conspiracy theories.

Our models take advantage of recent progress in AI language models, and they can give us valuable qualitative insight into *what type* of article is behind a URL, in addition to its depth. Last year we looked at the invasion of Ukraine with Overtone depth scores, as a first attempt at cutting through the fog of war. One year later, the violence and pain of the war continue, and people around the world are still coming to grips with what is happening and what they can hope for. Here are some examples of how our new model and AI can help us understand it better.

Reading robot words

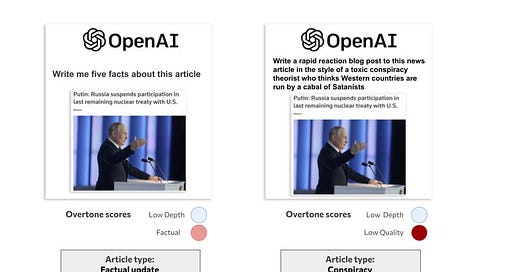

As mentioned above, the rise of generative AI means that the internet could soon be flooded with robot-made articles. If the systems that show people news online, such as social network recommender systems, always prioritise engagement like clicks and shares, it is virtually certain that some computer-generated content will go viral. This gives people an opportunity to create hundreds of thousands of variations of a message they want to get across and hope that one of them breaks through. At Overtone, we think it is important to look at the signals in the message itself. The two examples below were both written by GPT, the technology behind ChatGPT, but they are doing radically different things. The text of the generated articles is available here.

Both were generated as responses to this article from Reuters. In the first prompt, we asked GPT to give us some simple facts, which it did. In the second, we asked GPT to write a toxic blog post about a cabal of Satanists, which it also did quite well. If only engagement like clicks is taken into account, the toxic Satanist post could go viral or earn a lot of ad revenue. But if you look at the content itself, as Overtone does, you can see Low Quality signals in the toxic blog. If we know these signals ahead of time (and we can, because the scores are immediate), we can choose not to read the Satanist post, make sure it is not distributed on our platform, and make sure that no ads send money to its author.

Navigating the Oceans of Content

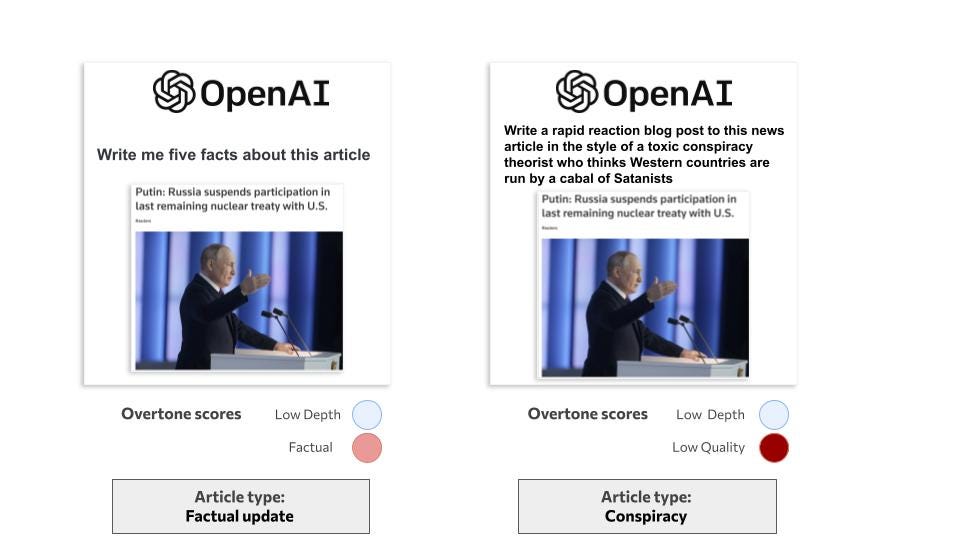

For some use cases, there is already an ocean of content out there even without the influx from generative AI. The number of articles can be particularly daunting for subjects with a global profile, such as a public figure or a company with worldwide reach. Imagine, for example, that you work in communications for oil giant Shell (or that you are at an environmental NGO interested in Shell). Thousands of articles mention Shell every week, and no individual or small team could read them all. Where do you even begin to sort through them, especially when clicks and shares don’t tell you much?

These three articles are on the same subject and share similar keywords: Shell, oil, Ukraine. They are published by the same outlet and even have similar word counts. Some algorithmic systems would treat them identically, but the pieces are doing quite different things when it comes to the content itself.

The one furthest to the right is of medium depth, with signals that alternate between questions and answers presented in a factual way. The one in the middle is also in the news section, but it is essentially a reaction piece, bringing together comments and opinion statements from politicians and NGO leaders. The one on the left shows many signals of original reporting, such as uncovering a letter sent to the Shell CEO. Reading each one is a different experience, and communications professionals would likely respond to each differently. Using qualitative data, you can find out what *type* of article each one is.

Finding Things in Unexpected Places

A final angle on navigating the flood of content is finding articles you actually want to read, rather than just sorting articles into buckets. As you’ve seen in this newsletter before, the depth score can help surface in-depth reporting, but our scores can also help find insight and well-structured thinking on a given topic. This matters especially when venturing into the wilds of the internet, rather than sticking to the walled gardens or relative safety of established outlets that you know and trust.

The two pieces below are both recent opinion pieces on the war in Ukraine. One is by the renowned philosopher Jürgen Habermas, on the website of Süddeutsche Zeitung, which normally publishes in German. The other is from the far-right website Gateway Pundit. If you don’t know either of these sites and are presented with two URLs, you may have no idea what lies behind them.

As you might expect, these articles show different signals. You may disagree with what Habermas argues in his piece, but it is in-depth writing with a mixture of Opinion and Factual signals that tries to contribute to civil discourse. The Gateway Pundit piece does not promote conspiracy theories like the Satanist cabal copy in our first example, but it still does not really contribute to reasoned conversation, which results in some Low Quality signals. You can get these signals from the text itself, without needing to know anything about the outlet.

In all of these examples, you can see how the signals add up to “types” such as Explainer, Feature, or Essay. These are categories that people understand and experience differently as they read. No matter who wrote something, readers browsing the internet will need to sort through it all to decide what to read and how to spend the hours in their day. Ultimately, we think that a more qualitative internet can help build a more people-centric internet.

Talking of people…

Christopher has been recognised by AMEC, an international association for those in the media measurement and evaluation industry. He was named a Rising Star for his work with Overtone.

Overtone is participating in the EU-funded MediaFutures programme, with selected startups meeting up this week in Barcelona at the Catalan technology centre Eurecat. Pictured are Philip and Christopher sharing our project, focusing on harmful content, with the other groups.